What is a codec? Let’s first say what it isn’t.

A codec isn’t a video format. To use an analogy: if a codec is the language a letter is written in, then the format is the envelope that the letter is carried in. That is why the same codec can often live happily within many different formats. Video formats are represented by the extension on your video file:

- QuickTime (.mov)

- MP4 (.mp4)

- Windows Media Video (.wmv)

So back to codecs – what are they exactly? A codec is a method of compressing the visual information in a video file. If we stored the color of every pixel in every frame of a video, the file size would be enormous (nearly 4 gigabytes per minute for 720p video). We can do the math:

1280 pixels wide x

720 pixels tall x

24 bits of color info per pixel (8 for red, 8 for green, 8 for blue) x

24 frames per second x

60 seconds per minute =

31,850,496,000 bits or

3.98 gigabytes.

Since this is a difficult size to deal with, computer scientists have devised codecs to reduce file sizes. (Codec stands for COmpressor-DECompressor.)
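
If you would like to check that arithmetic yourself, here is a minimal Python sketch. The function name is ours, made up for illustration, and we are assuming decimal gigabytes (1 GB = 1,000,000,000 bytes).

```python
def uncompressed_bits_per_minute(width, height, bits_per_pixel, fps):
    """Raw size, in bits, of one minute of video with no compression at all."""
    return width * height * bits_per_pixel * fps * 60


# 720p, 24 bits of color per pixel, 24 frames per second
bits = uncompressed_bits_per_minute(1280, 720, 24, 24)
gigabytes = bits / 8 / 1_000_000_000  # 8 bits per byte, decimal gigabytes

print(f"{bits:,} bits")       # 31,850,496,000 bits
print(f"{gigabytes:.2f} GB")  # 3.98 GB
```

Swap in 1920 and 1080 for the width and height to see how quickly the number grows for 1080p.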

Here are some common codecs:

- H.264

- Animation

- ProRes

- Sorenson (older)

What Is The Best Codec To Use?

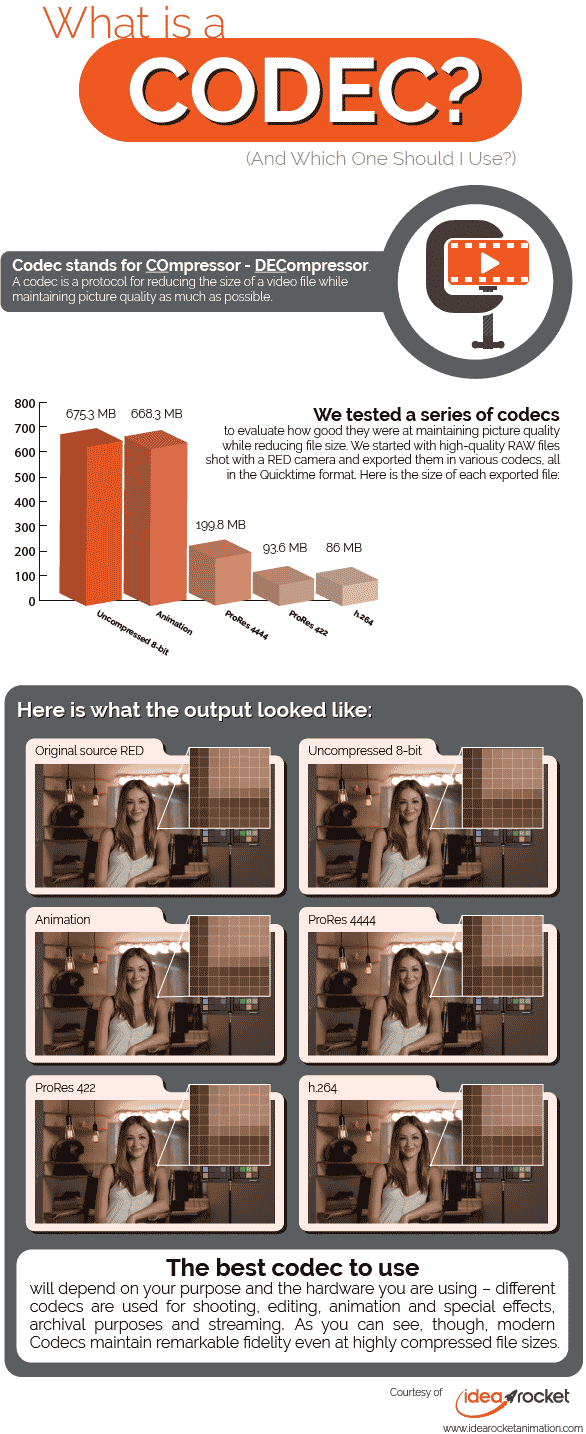

The answer to this question will depend on what your purpose is. Different codecs are used at different places in the video-making/distributing process. In the infographic below, we are testing these codecs:

- Shooting (RAW)

- Editing (ProRes 4:2:2 and Uncompressed 10-bit)

- Animation and Special Effects (Animation)

- Mastering and archiving (ProRes 4:4:4)

- Streaming or Distributing (H.264)

Let’s talk about some of these.

Animation: This is a lossless codec, meaning there is absolutely no degradation of quality. The only sort of compression it uses is Run-Length Encoding. (More on that in a bit.) It is a codec that is usually only used in 2D or 3D animation production, or in special effects. Since it depends on continuous areas of color for its compression power, it does little to reduce file size when used for live action.

H.264: This was a revolutionary codec when it came out in 2003. The dominant codec before then was Sorenson, and H.264 improved both picture quality and compression immensely, making possible the explosion of video content that came in the mid-aughts. Most of the video you see online still uses this codec. We usually deliver the explainer videos we make using .mp4 and H.264. The quality is so high that it is even accepted for many broadcast deliveries!

ProRes: This is an Apple-branded codec that was built for use in Final Cut Pro, but has stuck around after FCP was sidelined. It comes in a great many flavors for different applications, like editing (4:2:2) or mastering/archiving (4:4:4).

Uncompressed 8-bit or 10-bit: This is one of video’s great misnomers. Uncompressed video is actually compressed using Chroma Sub-sampling! (More on that in the next section.) It is a codec used for editing.

So what is the best codec to use? Usually you are best off going with the codec recommended for your chosen hardware (or the specs of your video host, if you are uploading something to stream).

How Do Codecs Work?

Codecs use different techniques to reduce file size.

Run-Length Encoding: Say you have a big patch of your video image that is the same color. It would not be economical to repeat the color information for every pixel in the frame. Rather, it would make more sense to say “this pixel is x color, and the following 35 pixels are the same color too.” An advantage of this method is that it is lossless, meaning no picture quality is lost at all.
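
To make that concrete, here is a small Python sketch of Run-Length Encoding on a single row of pixels. It only illustrates the idea; it is not how any particular codec stores its data, and the function names are our own.

```python
def rle_encode(pixels):
    """Collapse a row of pixels into [value, run_length] pairs."""
    runs = []
    for p in pixels:
        if runs and runs[-1][0] == p:
            runs[-1][1] += 1      # same color as the previous pixel: extend the run
        else:
            runs.append([p, 1])   # new color: start a new run
    return runs


def rle_decode(runs):
    """Expand [value, run_length] pairs back into the original row."""
    out = []
    for value, count in runs:
        out.extend([value] * count)
    return out


row = ["blue"] * 36 + ["white"] * 4
encoded = rle_encode(row)

print(encoded)                     # [['blue', 36], ['white', 4]]
print(rle_decode(encoded) == row)  # True: nothing was lost
```

Forty pixels shrink to two pairs, and decoding gives back exactly the original row, which is what lossless means. Notice that a row where every pixel differs from its neighbor would not shrink at all.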

Bit Depth: Usually in video we use 8 bits (8 binary digits) to define each color channel of a pixel. That results in 256 gradations per channel, which usually gives us a pretty good image, but if we want more definition we can apply 10 bits to each channel (1,024 gradations). This is what the 10-bit Uncompressed video does, and it actually makes the file a bit bigger. Likewise, we can reduce file size by communicating color information with fewer than 8 binary slots. The tell-tale sign that a file is being compressed this way is that we start to see banding on the gradated areas of the image.
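
Here is a rough Python sketch of why fewer bits means banding. We take a smooth 8-bit ramp and keep only the top 4 bits of each value; the helper names are ours, and dropping the low bits is just one simple way to reduce bit depth.

```python
def levels(bits):
    """Number of gradations a channel can express with this many bits."""
    return 2 ** bits


def reduce_bit_depth(value, from_bits, to_bits):
    """Keep only the most significant bits of a channel value."""
    return value >> (from_bits - to_bits)


print(levels(8), levels(10))  # 256 1024

ramp = list(range(256))                              # a smooth 8-bit gradient
banded = [reduce_bit_depth(v, 8, 4) for v in ramp]   # the same gradient in 4 bits

print(len(set(ramp)))    # 256 distinct shades
print(len(set(banded)))  # 16 distinct shades: each band is 16 pixels wide
```

Those 16 flat steps where there used to be 256 are the bands you see in a sky or a soft background.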

Chroma Sub-sampling: Our eyes are designed to be very sensitive to brightness, but not that sensitive to color. (In our retinas we have nearly 20 times the number of brightness-seeing Rod photoreceptors as color-seeing Cone receptors.) Codecs can take advantage of this human characteristic by keeping brightness information but throwing away some color information.

Rather than expressing a color value with three numbers representing colors (Red, Green, Blue), we can express a color value as Y’CbCr (brightness, plus a blue-difference and a red-difference color channel) and derive the missing color (green) by math, since brightness is just a weighted combination of the three primaries. The power of this is that it lets us make the color information chunkier – one pixel of color information may represent two or four pixels in the image. Because the brightness is still being varied for every pixel, however, the eye has a very hard time spotting the difference.
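
Here is a small Python sketch of that math. We are assuming the BT.601 brightness weights (0.299 for red, 0.587 for green, 0.114 for blue); other standards use slightly different weights. For simplicity the two color channels here are centered on zero rather than offset into a 0 to 255 range, and the function names are ours.

```python
# Assumed BT.601 weights: how much each primary contributes to brightness.
KR, KG, KB = 0.299, 0.587, 0.114


def rgb_to_ycbcr(r, g, b):
    """Turn R, G, B into brightness plus blue-difference and red-difference."""
    y = KR * r + KG * g + KB * b
    cb = (b - y) / 1.772   # scaled distance of blue from brightness
    cr = (r - y) / 1.402   # scaled distance of red from brightness
    return y, cb, cr


def ycbcr_to_rgb(y, cb, cr):
    """Recover R and B directly, then derive green from the brightness."""
    r = y + 1.402 * cr
    b = y + 1.772 * cb
    g = (y - KR * r - KB * b) / KG   # green is whatever brightness is left over
    return r, g, b


y, cb, cr = rgb_to_ycbcr(200, 120, 40)
r, g, b = ycbcr_to_rgb(y, cb, cr)
print(round(r), round(g), round(b))  # 200 120 40
```

Green never gets stored, yet it comes back intact, because the brightness value already carries it.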

When we see three numbers separated by colons, as we do with the ProRes codecs below, they represent how much information is being applied to the Brightness-Blue-Red channels. For instance, a 4:4:4 codec would have no Chroma Sub-sampling; brightness and color information are provided for every pixel. ProRes 4444 is a 4:4:4 codec; the additional 4 refers to the alpha channel (transparency information) that is sometimes used with this codec.

In a 4:2:2 codec (such as the ProRes 422 we have tested below) every pixel has brightness information, but out of every four pixels in a row we only have two pixels of color information in the blue and red channels. This process can reduce file sizes considerably while remaining barely perceptible.
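
As a rough illustration, here is 4:2:2 sub-sampling on one row of four pixels in Python. We are assuming that each pair of neighboring pixels shares the average of their two color values; a real codec may pick or filter the shared value differently. The function names and sample values are made up.

```python
def subsample_422(row):
    """row: an even-length list of (y, cb, cr) tuples.
    Keeps brightness for every pixel, one color pair per two pixels."""
    luma = [y for y, _, _ in row]
    cb = [(row[i][1] + row[i + 1][1]) / 2 for i in range(0, len(row), 2)]
    cr = [(row[i][2] + row[i + 1][2]) / 2 for i in range(0, len(row), 2)]
    return luma, cb, cr


def upsample_422(luma, cb, cr):
    """Rebuild a full row: each pixel reuses the color shared by its pair."""
    return [(y, cb[i // 2], cr[i // 2]) for i, y in enumerate(luma)]


row = [(100, 10, -20), (110, 12, -22), (50, -30, 40), (60, -34, 44)]
luma, cb, cr = subsample_422(row)

samples_444 = 3 * len(row)                    # 12 numbers for 4 pixels
samples_422 = len(luma) + len(cb) + len(cr)   # 4 + 2 + 2 = 8 numbers
print(samples_444, samples_422)               # 12 8
print(upsample_422(luma, cb, cr))
```

Eight numbers instead of twelve is a one-third saving before any other compression is applied, and the brightness of every pixel is untouched.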

Spatial compression: We have talked about how Run-Length Encoding can bunch together the same color and indicate that it is being repeated. Spatial Compression is like that, but it fudges a little bit. If two colors are similar, then it reproduces them as being the same. This can cause the same sort of banding that you see in low bit-depth images.
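
To show the "fudging" in the simplest possible way, here is a toy Python sketch that extends a run whenever the next pixel is within a tolerance of the run's first value. This is only our illustration of the idea described above; the tolerance parameter and function name are hypothetical, and real codecs generally use more elaborate methods.

```python
def lossy_runs(pixels, tolerance):
    """Like Run-Length Encoding, but near-identical values join the same run."""
    runs = []
    for p in pixels:
        if runs and abs(p - runs[-1][0]) <= tolerance:
            runs[-1][1] += 1      # close enough: treat it as the same color
        else:
            runs.append([p, 1])   # too different: start a new run
    return runs


row = [100, 101, 99, 102, 150, 151, 149, 200]

print(len(lossy_runs(row, 0)))  # 8 runs: exact matching finds nothing to merge
print(lossy_runs(row, 3))       # [[100, 4], [150, 3], [200, 1]]
```

With a tolerance of 3, eight values collapse to three runs, but the subtle variation inside each run is gone for good. That flattening is where the banding comes from.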

Temporal compression: Imagine a talking-head shot. On camera, we have a person talking and moving around a bit, but if the camera is on a tripod, the background isn’t moving. Why repeat the image information for the background areas if you can say “let’s repeat these patches of the image that don’t move for the next 50 frames”? When a codec does this, it is applying Temporal Compression.
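
Here is a toy Python sketch of that idea: store the first frame in full, then for each later frame store only the pixels that changed. The tiny one-row "frames" and the function names are made up for illustration; real codecs work on blocks of pixels and also track motion.

```python
def encode_delta(prev, curr):
    """List only the (position, new_value) pairs that differ from the previous frame."""
    return [(i, v) for i, (p, v) in enumerate(zip(prev, curr)) if p != v]


def apply_delta(prev, delta):
    """Rebuild the next frame from the previous frame plus its changes."""
    frame = list(prev)
    for i, v in delta:
        frame[i] = v
    return frame


background = [7] * 10           # a static background, ten pixels wide
frame0 = list(background)
frame1 = list(background)
frame1[4] = 9                   # the "talking head" appears at position 4
frame2 = list(background)
frame2[5] = 9                   # ...and then moves one pixel to the right

delta1 = encode_delta(frame0, frame1)
delta2 = encode_delta(frame1, frame2)

print(delta1)  # [(4, 9)]
print(delta2)  # [(4, 7), (5, 9)]
print(apply_delta(apply_delta(frame0, delta1), delta2) == frame2)  # True
```

Three frames of ten pixels each are stored as one full frame plus three small changes, and the background is never repeated.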

We have talked about five different techniques for reducing file size:

- Run-Length Encoding

- Bit Depth

- Chroma Sub-sampling

- Spatial Compression, and

- Temporal Compression

While these are common ways Codecs reduce file size while maximizing quality, many of the most effective codecs use a combination of these methods, or others we have not broached.

What Is Bit Rate?

Finally, let’s talk about a measure of how much a codec has compressed a video clip: the bit rate, usually expressed as an amount of data per second, such as megabits per second (Mbps). A codec that outputs at 8 Mbps would give us a file that is 8 megabits large (or 1 megabyte, to use a more common measure) for every second of video.
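
The conversion is simple enough to sketch in a few lines of Python. The function name is ours, and we are using decimal units (1 megabyte = 8 megabits). For comparison, we also work out the bit rate of the uncompressed 720p video from the earlier math.

```python
def file_size_megabytes(bitrate_mbps, seconds):
    """File size in megabytes for a clip at a constant bit rate."""
    return bitrate_mbps * seconds / 8   # 8 bits per byte


print(file_size_megabytes(8, 1))    # 1.0 megabyte per second
print(file_size_megabytes(8, 60))   # 60.0 megabytes per minute

# Uncompressed 720p at 24 bits per pixel and 24 frames per second
uncompressed_mbps = 1280 * 720 * 24 * 24 / 1_000_000
print(f"{uncompressed_mbps:.1f} Mbps uncompressed")             # 530.8 Mbps uncompressed
print(f"about {uncompressed_mbps / 8:.0f}x smaller at 8 Mbps")  # about 66x smaller at 8 Mbps
```

So a minute of 720p video at 8 Mbps comes to about 60 megabytes, against nearly 4 gigabytes uncompressed.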

A mistake that many people make is to confuse bit rate with quality. Some codecs can produce excellent images at the same bit rate that would produce a chunky mess in older, inferior codecs.

If you are rendering out a video, your chosen Codec will often ask you what bit rate you would like to output at. When you set it, you should remember that codecs nearly always have a sweet range where they are producing the best images at the smallest file sizes. Larger bit rates usually produce only very marginal improvement, while smaller bit rates can quickly deteriorate the image. Experimentation (or just experience) will tell you where a Codec’s sweet range is.
